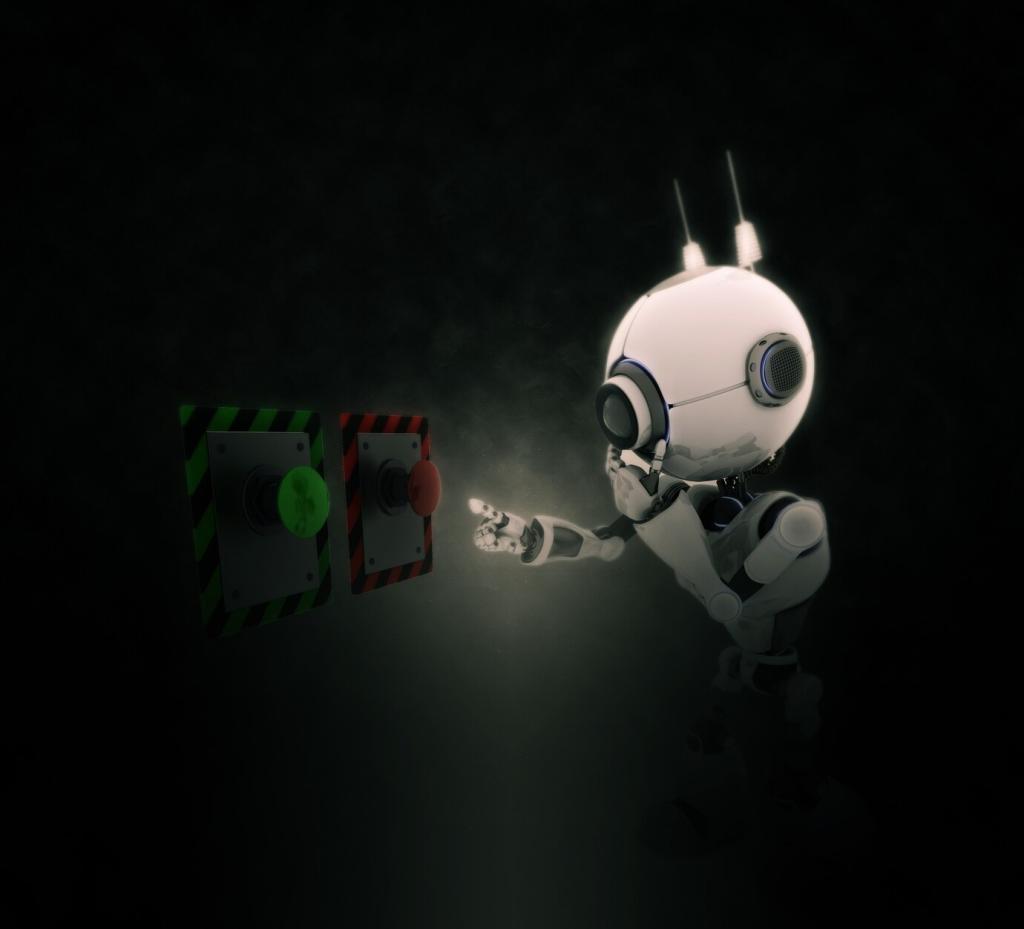

Ethical Considerations in Artificial Intelligence: Building Trustworthy Technology Together

Welcome to a space where innovation meets responsibility, and where we translate complex ethical questions into practical steps for creators, leaders, and curious readers. Join us, share your perspective, and help shape AI that genuinely serves people.

Real-world stakes you can feel

A hospital once piloted an AI to prioritize patient care. It seemed accurate until clinicians noticed that disadvantaged patients were ranked lower, because the historical data it learned from reflected years of underinvestment in their care. Ethics turned from a checklist into a life-or-death conversation overnight.

From novelty to necessity

As AI moves from lab demos to schoolrooms, courtrooms, and boardrooms, ethical guardrails become essential infrastructure. Systems that respect rights, dignity, and context earn trust faster and fail less dramatically. Tell us where you see this most urgently needed.

Trust as a differentiator

People choose tools they understand and can question. Explainable decisions, clear recourse, and authentic transparency create loyalty. Subscribe to get actionable ethical checklists and share how your team builds trust into everyday AI work.

Fairness and Bias: From Data to Decisions

Historical data can encode unequal access to healthcare, credit, or education. Ethical reviews should probe data sources, sampling methods, and labeling incentives, and confirm that the problem definition does not quietly disadvantage specific groups by design.

Metrics such as demographic parity, equalized odds, and calibration within groups, together with subgroup analysis, help quantify disparities between groups. Combine these metrics with domain context and stakeholder feedback, because fairness is a social goal, not only a statistical number.
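To make two of these metrics concrete, here is a minimal sketch in plain Python. It assumes binary labels and predictions (0 or 1) and a single group attribute per record; the function names `group_rates` and `gap` and the toy data are illustrative, not taken from any particular library. The selection-rate gap corresponds to demographic parity, and the true-positive and false-positive rate gaps together correspond to equalized odds.

```python
from collections import defaultdict


def group_rates(y_true, y_pred, groups):
    """Per-group selection rate, true-positive rate, and false-positive rate."""
    counts = defaultdict(
        lambda: {"n": 0, "sel": 0, "pos": 0, "tp": 0, "neg": 0, "fp": 0}
    )
    for t, p, g in zip(y_true, y_pred, groups):
        c = counts[g]
        c["n"] += 1
        c["sel"] += p          # predicted positive
        if t == 1:
            c["pos"] += 1
            c["tp"] += p       # predicted positive and actually positive
        else:
            c["neg"] += 1
            c["fp"] += p       # predicted positive but actually negative
    rates = {}
    for g, c in counts.items():
        rates[g] = {
            "selection_rate": c["sel"] / c["n"],
            "tpr": c["tp"] / c["pos"] if c["pos"] else float("nan"),
            "fpr": c["fp"] / c["neg"] if c["neg"] else float("nan"),
        }
    return rates


def gap(rates, key):
    """Largest difference between any two groups for one rate."""
    values = [r[key] for r in rates.values()]
    return max(values) - min(values)


# Toy data (made up for illustration): two groups of four people each.
y_true = [1, 0, 1, 0, 1, 0, 1, 0]
y_pred = [1, 0, 1, 1, 0, 0, 1, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

rates = group_rates(y_true, y_pred, groups)
print(gap(rates, "selection_rate"))  # demographic parity gap: 0.5
print(gap(rates, "tpr"))             # equalized odds, TPR gap: 0.5
print(gap(rates, "fpr"))             # equalized odds, FPR gap: 0.5
```

A gap of zero on every rate would mean the groups are treated identically by these measures. What counts as an acceptable gap is a judgment for domain experts and affected stakeholders, not something the code can decide.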

Transparency and Explainability That People Understand

Use model cards and dataset datasheets to record training sources, intended use, limitations, and known risks. This living documentation helps engineers, users, and auditors understand how decisions are made and where caution is warranted.

Counterfactual and example-based explanations translate technical reasoning into relatable terms. Instead of raw weights, show what changes would alter an outcome. Invite feedback from non-technical users to ensure explanations are genuinely useful, not just technically correct.
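As a rough illustration of a counterfactual explanation, the sketch below assumes a simple linear scoring model with a fixed approval threshold. The feature names, weights, and bias are hypothetical, and real models usually need more careful search and feasibility checks. For each feature, it reports how much that feature alone would need to change for the score to reach the threshold.

```python
def score(features, weights, bias):
    """Linear score: bias plus the weighted sum of the features."""
    return bias + sum(weights[name] * features[name] for name in weights)


def single_feature_counterfactuals(features, weights, bias, threshold=0.0):
    """Change needed in each feature, holding the others fixed, to reach the threshold."""
    shortfall = threshold - score(features, weights, bias)
    return {
        name: shortfall / weight
        for name, weight in weights.items()
        if weight != 0  # a zero-weight feature can never change the outcome
    }


# Hypothetical credit-style example; all numbers are made up.
weights = {"income_k": 0.5, "missed_payments": -2.0}
bias = -18.0
applicant = {"income_k": 36, "missed_payments": 2}

print(score(applicant, weights, bias))  # -4.0, below the threshold of 0.0
print(single_feature_counterfactuals(applicant, weights, bias))
# {'income_k': 8.0, 'missed_payments': -2.0}
```

Read aloud, the result becomes "the outcome would change with 8 more (thousand) in income, or with 2 fewer missed payments," which is closer to how a non-technical user thinks than a list of weights.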

Ethical systems admit uncertainty, display confidence intervals, and clarify when outputs should not be trusted. This candor reduces overreliance and invites appropriate human oversight. Comment with moments when honest uncertainty improved decisions in your organization.
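One simple way to act on uncertainty is to have the system abstain when it is not confident and route the case to a person. The sketch below assumes the model outputs a probability between 0 and 1; the function name `decide` and the 0.9 and 0.1 cut-offs are placeholders that a real team would set with domain experts.

```python
def decide(probability, confident_above=0.9, confident_below=0.1):
    """Return a decision label, deferring to a human when the model is unsure."""
    if not 0.0 <= probability <= 1.0:
        raise ValueError("probability must be between 0 and 1")
    if probability >= confident_above:
        return "positive"
    if probability <= confident_below:
        return "negative"
    # The model is in its uncertain band: do not guess, ask a person.
    return "needs_human_review"


print(decide(0.97))  # positive
print(decide(0.55))  # needs_human_review
print(decide(0.03))  # negative
```

Showing the probability alongside the label, rather than the label alone, is one way to keep the uncertainty visible to whoever acts on the output.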

Consent, control, and purpose limitation

Collect only what you need, explain why in plain terms, and give people meaningful choices. Ethical consent includes understandable terms, easy revocation, and clear data retention timelines tied to the legitimate purposes you have stated and actually follow.
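Retention timelines are easier to honor when they are written down as data and checked automatically. The sketch below assumes each record carries a `purpose` and a timezone-aware `collected_at` timestamp; the purposes and retention periods shown are hypothetical examples, not recommendations.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical retention policy: one documented period per stated purpose.
RETENTION = {
    "voice_snippet": timedelta(days=30),
    "billing_record": timedelta(days=365),
}


def split_by_retention(records, retention, now):
    """Separate records still within their retention period from those to purge."""
    keep, purge = [], []
    for record in records:
        limit = retention.get(record["purpose"])
        # No documented purpose means no stated basis to keep the data.
        if limit is None or now - record["collected_at"] > limit:
            purge.append(record)
        else:
            keep.append(record)
    return keep, purge


now = datetime(2024, 6, 1, tzinfo=timezone.utc)
records = [
    {"id": 1, "purpose": "voice_snippet",
     "collected_at": datetime(2024, 5, 20, tzinfo=timezone.utc)},
    {"id": 2, "purpose": "voice_snippet",
     "collected_at": datetime(2024, 4, 1, tzinfo=timezone.utc)},
    {"id": 3, "purpose": "unspecified",
     "collected_at": datetime(2024, 5, 31, tzinfo=timezone.utc)},
]

keep, purge = split_by_retention(records, RETENTION, now)
print([r["id"] for r in keep])   # [1]
print([r["id"] for r in purge])  # [2, 3]
```

Treating records with an unknown purpose as purge candidates is a deliberate design choice in this sketch: it makes purpose limitation the default rather than an afterthought.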

Techniques that protect privacy

Differential privacy, federated learning, synthetic data, and secure enclaves reduce exposure without halting innovation. Pair these techniques with access controls and auditing so privacy is supported by architecture, not promises alone.
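To give a feel for how differential privacy works, here is a minimal sketch of the Laplace mechanism applied to a counting query. It assumes that adding or removing one person changes the count by at most 1 (sensitivity 1), so the noise scale is 1/epsilon. The function name `private_count` is illustrative, and Python's `random` module is not suitable for production privacy guarantees; a vetted differential privacy library would be the safer choice in practice.

```python
import random


def private_count(values, predicate, epsilon, rng=None):
    """Count matching values, then add Laplace noise with scale 1/epsilon."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    rng = rng or random.Random()
    true_count = sum(1 for v in values if predicate(v))
    scale = 1.0 / epsilon  # sensitivity of a counting query is 1
    # The difference of two independent exponential samples with mean
    # `scale` follows a Laplace distribution centered at zero.
    noise = rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)
    return true_count + noise


# Toy example: how many (made-up) ages are 65 or older?
ages = [23, 67, 45, 71, 34, 80, 52, 66]
rng = random.Random(0)  # seeded only so the demo is repeatable
noisy = private_count(ages, lambda age: age >= 65, epsilon=1.0, rng=rng)
print(round(noisy, 2))  # close to the true count of 4, but not exact
```

A smaller epsilon means more noise and stronger privacy; a larger epsilon means a more accurate but less private answer. Each released answer also spends part of an overall privacy budget, which this sketch does not track.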

Learning from a voice assistant mishap

A team stored raw voice snippets longer than needed, creating risk without benefit. After a scare, they minimized retention and implemented stronger anonymization. Share how your team balances personalization with privacy, and subscribe for practical templates.

Safety, Alignment, and Responsible Deployment

Implement content filters, rate limits, and context constraints that bound behavior. Use staged rollouts, canary testing, and guardrail prompts so emerging risks are caught early. Remember: safety layered across the lifecycle is more resilient than any single control.
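Two of these controls are easy to sketch. The first function below assigns each user to a stable bucket from 0 to 99 so a feature can be rolled out to a chosen percentage, and the class is a basic token-bucket rate limiter. Both are simplified illustrations with hypothetical names (`in_rollout`, `TokenBucket`, the `salt` value), not a description of any specific platform's rollout or throttling API.

```python
import hashlib
import time


def in_rollout(user_id, percent, salt="feature-x"):
    """Deterministically place a user in a 0-99 bucket and compare to the rollout percent."""
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100
    return bucket < percent


class TokenBucket:
    """Allow bursts up to `capacity` requests, refilled at a steady rate."""

    def __init__(self, capacity, refill_per_second, clock=time.monotonic):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.clock = clock
        self.tokens = float(capacity)
        self.updated = clock()

    def allow(self):
        now = self.clock()
        elapsed = now - self.updated
        # Add tokens for the time that has passed, never exceeding capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        self.updated = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


# Staged rollout: a user included at 5% stays included when the stage widens to 50%.
print(in_rollout("user-42", 0))    # False, nobody is included at 0%
print(in_rollout("user-42", 100))  # True, everybody is included at 100%

# Rate limit: a burst of 2 requests is allowed, the third is rejected.
limiter = TokenBucket(capacity=2, refill_per_second=1.0)
print([limiter.allow() for _ in range(3)])  # [True, True, False]
```

Because the bucket depends only on the salt and the user ID, widening the percentage never removes users who were already included, which keeps canary observations consistent between stages.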

Global Standards and Emerging Regulations

Frameworks like the EU AI Act, NIST AI Risk Management Framework, and OECD principles highlight risk tiers, documentation, and human oversight. Map your use case early to avoid rushed fixes and costly compliance surprises later.

Keep technical files, risk assessments, data provenance records, and impact statements up to date. These artifacts demonstrate ethical diligence and speed up audits. Subscribe to receive a living checklist for aligning engineering workflows with evolving regulatory expectations.

Human-Centered Design and Inclusion

Bring domain experts, impacted groups, and frontline workers into discovery and testing. Their lived experience reveals risks that academic benchmarks miss. Invite them early, compensate them fairly, and integrate their feedback visibly to build genuine partnership and trust.

Design for diverse abilities with clear language, captions, screen reader support, and adaptable interfaces. Accessibility reduces exclusion and often improves usability for everyone. Comment with your favorite accessibility improvement that unexpectedly delighted all users.

Right-size models, prune parameters, distill knowledge, and cache smartly. Efficient systems often run faster and cheaper while meeting user needs. Align performance goals with sustainability targets so teams celebrate both speed and stewardship.